Hyper Flow 971991551 Neural Node

The Hyper Flow 971991551 Neural Node is a modular, high-throughput component for adaptive data routing. It combines probabilistic inference with differentiable optimization so that routing decisions can be learned end to end under uncertainty. The design targets scalable deployment across edge and cloud resources and aims for interpretable behavior. Its practical viability depends on robust performance, resilience, and explainability across heterogeneous environments, which makes deployment strategy and evaluation metrics the natural next questions.

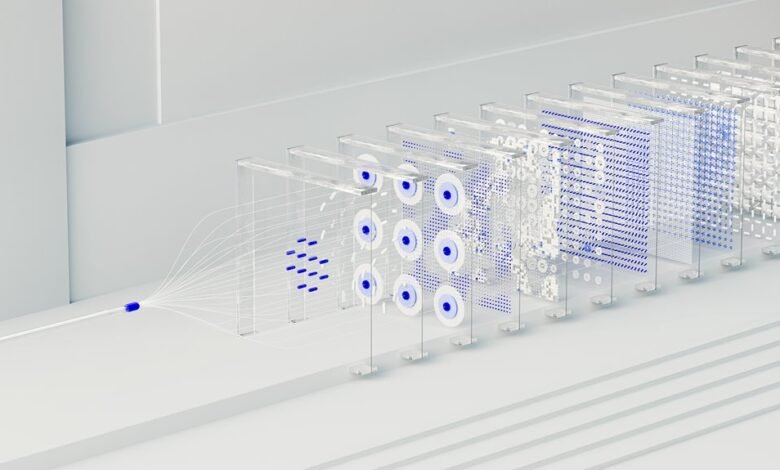

What Is the Hyper Flow 971991551 Neural Node?

The Hyper Flow 971991551 Neural Node is a conceptual component within advanced network architectures that combines high-throughput data processing with adaptive routing. This overview treats its data paths and decision logic as hypothetical, emphasizing modular design and scalable connectivity.

Neural integration is treated as an emergent property: coordinated subsystems align to sustain efficient, flexible computation across heterogeneous environments.

How Hybrid Probabilistic and Differentiable Architecture Works

Hybrid probabilistic and differentiable architectures combine stochastic inference with gradient-based optimization to enable end-to-end learning in uncertain, complex environments. Sampling captures uncertainty, while differentiable modules keep the whole pipeline trainable by gradient descent.

A hybrid probabilistic framework models latent variables, while a differentiable architecture preserves backpropagation paths through them. This combination is meant to give the Hyper Flow node robust adaptability, selective inference, and transparent optimization dynamics.
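One common way to keep a backpropagation path through a sampled latent variable is the reparameterization trick. The sketch below is illustrative only and is not taken from any Hyper Flow implementation: the function name `train_latent`, the scalar Gaussian latent, and the squared-error loss are all assumptions chosen to keep the example small.

```python
import random


def train_latent(target, steps=2000, lr=0.01, seed=0):
    """Fit a Gaussian latent z ~ N(mu, sigma^2) so that z lands near `target`.

    Hypothetical toy setup: the loss is (z - target)^2 and gradients are
    written out by hand instead of using an autodiff library.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0
    for _ in range(steps):
        eps = rng.gauss(0.0, 1.0)      # noise drawn independently of the parameters
        z = mu + sigma * eps           # reparameterized sample: differentiable in mu, sigma
        err = z - target
        grad_mu = 2.0 * err            # d/dmu    of (z - target)^2
        grad_sigma = 2.0 * err * eps   # d/dsigma of (z - target)^2
        mu -= lr * grad_mu
        sigma -= lr * grad_sigma
    return mu, sigma


mu, sigma = train_latent(target=3.0)
print(f"mu={mu:.3f} sigma={sigma:.3f}")
```

Because the randomness enters only through `eps`, the sample `z` is a deterministic function of `mu` and `sigma`, so gradients flow to both. In this toy loss nothing rewards uncertainty, so `sigma` shrinks toward zero; a practical model would normally add a regularizer such as a KL term to keep the distribution from collapsing.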

Real-World Edge and Cloud Deployments: Use Cases and Benefits

Edge deployments provide low-latency inference close to the data, while cloud deployments provide scale and centralized model management across diverse operational contexts.

Typical use cases include distributed inference, offline training synchronization, and policy-driven updates, which preserve local autonomy while keeping governance centralized.

Benefits include reduced edge latency and streamlined model deployment, enabling resilient operations, adaptive workloads, and secure multi-site collaboration without sacrificing control or transparency.
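A policy-driven choice between edge and cloud inference can be sketched as a small placement function. Everything here is hypothetical: the `Target` fields, the `capacity` score, and the rule "use the most capable healthy target that fits the latency budget, otherwise the fastest healthy one" are assumptions made for illustration, not a documented Hyper Flow policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str
    latency_ms: float   # assumed round-trip latency estimate
    capacity: float     # assumed relative model capacity (higher is better)
    healthy: bool = True


def choose_target(targets, latency_budget_ms):
    """Pick where to run inference under a latency budget."""
    healthy = [t for t in targets if t.healthy]
    if not healthy:
        raise RuntimeError("no healthy inference target available")
    within_budget = [t for t in healthy if t.latency_ms <= latency_budget_ms]
    if within_budget:
        # Prefer the most capable target that still meets the budget.
        return max(within_budget, key=lambda t: t.capacity)
    # Nothing meets the budget: degrade gracefully to the fastest target.
    return min(healthy, key=lambda t: t.latency_ms)


edge = Target("edge", latency_ms=8.0, capacity=1.0)
cloud = Target("cloud", latency_ms=60.0, capacity=10.0)
print(choose_target([edge, cloud], latency_budget_ms=100.0).name)
print(choose_target([edge, cloud], latency_budget_ms=20.0).name)
```

Keeping the policy in one pure function makes it easy to audit and to update centrally, which fits the governance goal described above.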

Evaluating Performance, Explainability, and Resilience

Model interpretability is assessed through stable explanations, reproducible metrics, and traceable decision paths.
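One way to make "stable explanations" measurable is to compare feature-attribution vectors produced for the same input across repeated runs. The sketch below uses mean pairwise cosine similarity as the stability score; the metric choice and the function names are assumptions for illustration, not a method prescribed by the source.

```python
import math
from itertools import combinations


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def explanation_stability(attributions):
    """Mean pairwise cosine similarity over attribution vectors from repeated runs."""
    pairs = list(combinations(attributions, 2))
    if not pairs:
        raise ValueError("need at least two attribution vectors")
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


# Hypothetical attributions for one input from three runs with different seeds.
runs = [[0.70, 0.20, 0.10], [0.68, 0.22, 0.10], [0.72, 0.18, 0.10]]
print(round(explanation_stability(runs), 4))
```

A score near 1 means the explanations agree in direction across runs; a low score suggests the explanation method, or the model itself, is sensitive to seeds or noise.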

Resilience evaluation measures fault tolerance, recovery speed, and graceful degradation, yielding objective, actionable results for governance and design refinement.
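Recovery speed and graceful degradation can be read off a throughput trace recorded around an injected fault. The following sketch assumes a list of `(timestamp_s, throughput)` samples sorted by time and counts the system as recovered once throughput stays at or above 90% of baseline; the function name, the trace format, and the 90% threshold are all hypothetical.

```python
def resilience_summary(samples, fault_time, baseline, recovered_fraction=0.9):
    """Summarize degradation depth and recovery time after a fault.

    samples: list of (timestamp_s, throughput) tuples, sorted by timestamp.
    Returns worst throughput as a fraction of baseline, plus the time from
    the fault until throughput is back above the threshold for good
    (None if the trace ends while still degraded).
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    post = [(t, v) for t, v in samples if t >= fault_time]
    if not post:
        raise ValueError("no samples at or after fault_time")
    threshold = recovered_fraction * baseline
    worst = min(v for _, v in post)
    below = [i for i, (_, v) in enumerate(post) if v < threshold]
    if not below:
        recovery = 0.0                       # never dropped below threshold
    elif below[-1] == len(post) - 1:
        recovery = None                      # still degraded at end of trace
    else:
        recovery = post[below[-1] + 1][0] - fault_time
    return {"worst_fraction": worst / baseline, "recovery_s": recovery}


trace = [(0.0, 100.0), (1.0, 100.0), (2.0, 40.0), (3.0, 60.0), (4.0, 95.0), (5.0, 100.0)]
print(resilience_summary(trace, fault_time=2.0, baseline=100.0))
```

The worst-case fraction indicates how gracefully the system degrades, and the recovery time indicates how quickly it returns to normal service. Both are only as meaningful as the fault scenarios and baseline chosen for the test.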

Conclusion

The Hyper Flow 971991551 Neural Node combines throughput and adaptability in a design where data paths can reconfigure in real time. Its hybrid probabilistic and differentiable core is intended to support fast, gradient-guided decisions while retaining stochastic robustness. In edge and cloud ecosystems, the goal is deployments that are scalable, interpretable, and resilient, with routing that emerges from coordinated subsystems. If those properties hold up under evaluation of performance, explainability, and resilience, the architecture could serve as a reference point for future modular neural nodes.